Freeloaded Codex accounts tend to get banned, so here is a fairly simple method that should keep things trouble-free for at least a month:

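1. Free period: a 30-day ChatGPT Plus trial

2. Quota: more than enough for everyday use

3. Requirements: just a PayPal account (one linked to a mainland Chinese debit card works too)

4. Safety: cancel the subscription in time and you will not be charged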

Steps:

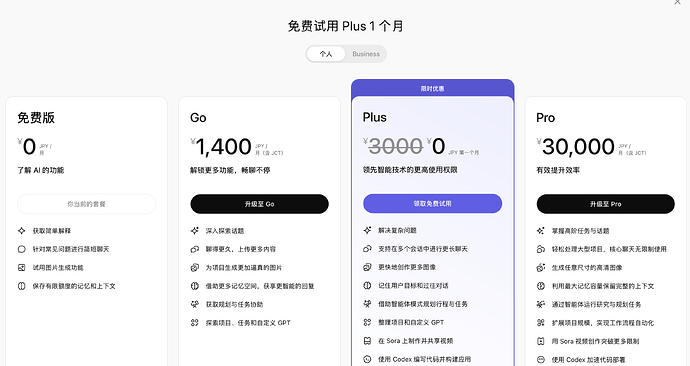

1. Open the official ChatGPT website and go to the pricing page.

You can sign in with any of the following accounts:

Google account

Apple account (Apple ID)

Microsoft account

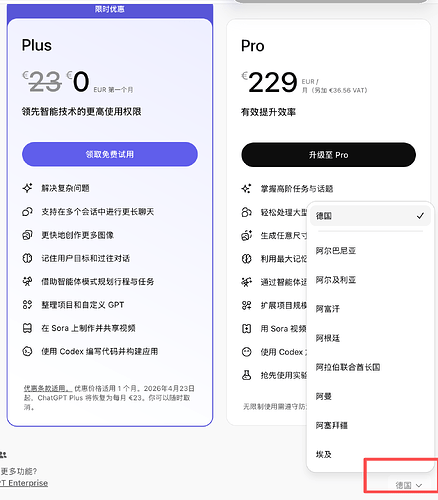

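2. Switch the region to Germany

After signing in, switch the region to Germany in the bottom-right corner of the page.

Why Germany? Because the German region accepts PayPal!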

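3. Link PayPal: enter your PayPal account details to link it.

About linking PayPal: Don't have a PayPal account? Register one for free and link any mainland Chinese debit card, ideally one you rarely use (tested, it works). No credit card needed!

Then go back to the ChatGPT page and fill in the billing address.

Note: the country in the billing address must match the location of your proxy node, not Germany as selected earlier.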

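4. Confirm your Plus status: once linking succeeds, the page will show that you are a Plus member.

5. Important! Cancel auto-renewal. This step matters a lot! Do it right after linking succeeds.

How to cancel the subscription: click your avatar, open Settings, go to the Account section, and click Cancel subscription. After canceling, you can keep using Plus for the rest of the current 30 days; when the trial ends, the subscription stops automatically and you will not be charged!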